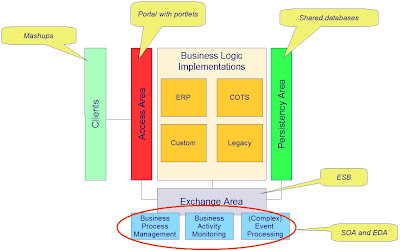

How to obtain high autonomy and low mutual dependencies of the functional entities in an organization with regard to message interaction and service exposure of SOA? In this pattern I'll describe a model for a federated multi-bus SOA-platform that satisfies the desired autonomies and low mutual dependencies in complex organizations.

The Enterprise Service Bus, what’s in a name

First of all: what is an ESB? Despite of what the market tries to make us believe, an ESB is not for sale. An ESB is an enterprise wide role of a service bus. It's the enterprise itself who decides about the role of the service bus products offered by the vendors. In this pattern, I will not mention the Enterprise Service Bus, as this name does not clearly qualify its position in a federated service bus infrastructure; is it the corporate level service bus or is it the entire service bus infrastructure of the whole enterprise? I take the short way: avoiding the acronym. I will only use the acronym when referring to marketed service bus products.

Levels of federation

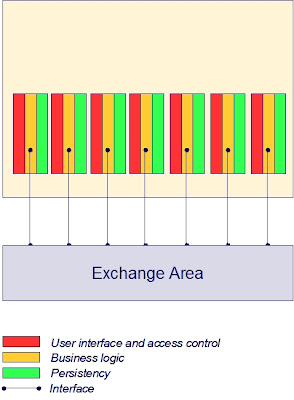

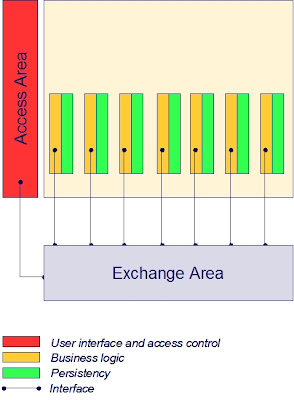

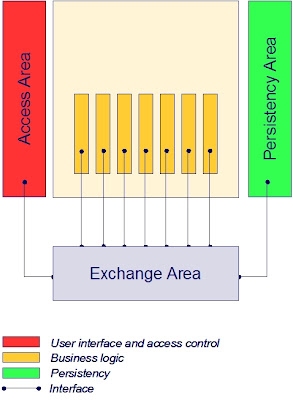

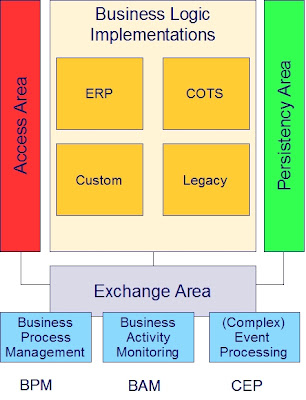

This pattern suggests four levels of interest in a federated service bus infrastructure consisting of multiple logical buses:

- Application level – multiple application buses per domain, one for each application

- Domain level – multiple domain buses, one for each domain

- Corporate (enterprise) level – one corporate bus for the enterprise

- External level – one external gateway for the enterprise

The application level service buses support fine grained application level processes and activity monitoring. Each application is bound to its own logical bus. In practice this boundary will typically be implemented by an application name space on an application server using JMS (java) or WCF (.net). Complex distributed multi-technology applications may take advantage of a dedicated service bus implementation like SonicESB or JBoss.

A domain is a functional cohesive entity, like Human Resource Management, Finance, Logistics, Sales, Acquisition. Service buses at this level support cross application processes and activity monitoring within the boundaries of the distinct domains. Domains also expose domain generic services to be accessed by the domain’s applications.

The corporate level service bus supports cross domain processes and activity monitoring. At the corporate level also enterprise wide generic services are exposed to be accessed by the domains.

The external level service bus supports interaction with the company’s outside world, the business partners, consumers and suppliers. The external service bus exposes external services to the company and supports exposing the company’s services tot the external world.

The different service buses in this pattern need not be different products or different instances of a product. Depending on the organization’s standardization policies, solutions may vary from one multi-tenant product implementation, multi-instance implementations of one product, multi-product implementations to any combination of these.

Services and messages

A distinction is made between services and messages. In this pattern services are synchronously callable modules to be called from within process steps and messages are asynchronously passable data objects between process steps.

Service interactions are by nature tightly coupled: sender and receiver know each other and must both be available at the same time of interaction; the called service influences the performance of the calling service.

Messages allow for loosely coupled interaction: sender and receiver don’t know each other; they need not be available at the same time and they do not influence each other’s performance. The sender publishes the message to a “medium”, and the interested receivers subscribe to the message. The medium is typically the publishers local service bus which - by configuration - propagates the message to the receivers’ local service buses. The receivers subscribe to the message in their own local environment.

How to avoid spaghetti?

“If you like pasta but hate to eat spaghetti, try lasagna.”

In this pattern a layered structure of interaction is promoted to maintain the desired boundaries of autonomy and yet structure controllability. This layered structure leads to a hierarchical parent-child communication approach. A child has only one parent (it's only a metaphor, folks), a parent may have multiple children. In this federated service bus pattern parents and children are defined as follows:

- An application is a child of one domain (n:1); a domain is the parent of one ore more applications (1:n)

- A domain is a child of the enterprise (n:1); the enterprise is the parent of one ore more domains (1:n)

- The enterprise (corporate level) is a child of the external level (1:1); the external level is the parent of the enterprise (1:1)

The parent-child approach for communication in this pattern is comparable to the fundamental principles used in structured design approaches, and in defining inheritance and encapsulation in object oriented environments. It creates the “universal system physics” in order to guarantee the ability to keep control over construction and modification of complex software systems (a.k.a. “avoiding spaghetti”).

This leads to the following restrictive policies in cross-boundary communications:

- Child-level processes may deliver messages to their parent’s bus

- Parent-level processes may deliver messages to their children's buses

- Downward skip-level messaging always cascade from parent bus to child bus

- Upward skip-level messaging always cascade from child bus to parent bus

- Parent-level buses may expose services to their children's buses

No other cross-boundary communication channels are allowed. Horizontal message communications between different applications always take place via the respective domain bus. And horizontal message communications between different domains always take place via the corporate bus.

Practice

From a cost-efficiency point of view it would be preferable to implement all levels of this federated model within one product- and administration environment.

Practice, however, is more subtle. Domain models will mostly be shaped on a foundation of autonomy which has organically risen from culture, history and mightiness. Domains tend to make their own choices with respect to IT-resources such as applications, tools and platforms. Especially when domains originate from fusions and merges with other companies the enterprise will have to cope with redundancy and duplicate solutions. Maintaining this redundancy for the sake of autonomy and independence has an enterprise value. Departments are more loosely coupled which improves agility and offers the possibility to excel. Maintaining redundancy might pay off better than avoiding redundancy which creates constraining dependencies.

With regard to a federated service bus infrastructure, the use of different products - if smartly delimited with regard to different independent administration environments - is no big deal anymore in these days of mature open standards. Supporting interoperability is the main focus in the current IT-industry.

The available interoperability between multiple ESB-products also supports choosing ESB-products based on the characteristics of the specific application- or domain environment. Think of products that are strong or weak in aspects like centralization (hub), decentralization (distributed), multi-tenant (logical separation), multi-instance (physical separation, clustering), device footprint, high volume message routing, back-office processing. E.g. a service bus in an environment of moving trains and gates and vending machines on stations is of quite a different characteristic than an service bus in a centralized data center environment.

By choosing a standards based ESB-solution conformed to a federated and distributed implementation model, the IT-infrastructure will mature to an enormous, agile - yet relatively cheap - business enabler for most (if not all) enterprises.