I attended HP Software Universe in Barcelona in late November 2007, and I was struck by a new insight.

All those people were talking about the life cycle of IT services and how to monitor the complex and interrelated compositions of infrastructural components to guarantee continuity.

Service Life Cycle

To explain my insight let me start with the service life cycle, on which SOA governance is founded.

There is the service strategy, where - among others - the market and the market value of the service is determined. The service portfolio and ownership must be managed and there must be a financial model to deliver and maintain the service.

Then there is the service design, where solutions are developed in terms of architecture, technology, people and processes. Processes are developed with regard to service catalog management, continuity, security, service levels and more.

The service transition includes processes like change management, configuration management, releases, planning and testing.

Finally service operation has to be governed with a focus on keeping services running. This includes for instance incident management, problem management and access management.

All of the above are aspects of SOA governance, aren't they? And this is exactly the scope of ITIL v3!

Insight

Whereas ITIL has by definition focused on IT services for many years, the term "IT services" can easily be replaced by "business services" or "application software services". The top level of the configuration tree in ITIL is the application. But (IT-oriented) SOA decomposes applications into service configurations, and business processes are composed out of autonomous business services within the Service Oriented Architecture domain, so the governance model of ITIL can be stretched to SOA governance. With SOA the tree doesn't have to stop at the application level anymore.

Tool integration

Besides one uniform and well-defined governance strategy for business, application and infrastructure services, there is another huge benefit to pulling ITIL into an SOA context: the ITIL-oriented tools.

UDDI service catalogues and BPM metadata-repositories could be merged with the Configuration Management Data Base (CMDB), which makes it possible to extend the infrastructure monitoring tools through the level of business services in an SOA and combine them with BAM tools. (BPM=Business Process Management; BAM=Business Activity Monitoring)

This integration allows for end-to-end insight into the total business process, down to the ultimate detail level of individual infrastructure components, in one single view.

E.g. the impact of a broken router can easily and instantly be traced up to multiple business process instances, such as the violation of a specific delivery agreement with a specific business customer (if the router is not repaired within a certain time limit). And in case of a serious delay (beyond the agreed MTTR, Mean Time To Repair) the service desk can automatically inform the customer, offering a discount for the inconvenience to keep her happy. All without any manual intervention.
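The router example can be sketched as a walk over the dependency relations that a merged CMDB and service catalogue would hold. This is only a minimal illustration: the component names, the `DEPENDENTS` table and the `impacted_by` function are hypothetical and do not come from any ITIL tool or CMDB product.

```python
from collections import defaultdict, deque

# Hypothetical CMDB relations: each pair reads "component -> item that depends on it".
DEPENDENTS = defaultdict(list)
for component, dependent in [
    ("router-7", "app-server-2"),
    ("app-server-2", "order-service"),
    ("order-service", "order-to-delivery process"),
    ("order-service", "invoicing process"),
]:
    DEPENDENTS[component].append(dependent)


def impacted_by(failed_component):
    """Return everything that directly or indirectly depends on the failed item."""
    seen = {failed_component}
    queue = deque([failed_component])
    impacted = []
    while queue:
        current = queue.popleft()
        # .get avoids adding empty entries to the defaultdict while searching.
        for dependent in DEPENDENTS.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                impacted.append(dependent)
                queue.append(dependent)
    return impacted


print(impacted_by("router-7"))
```

In this sketch the infrastructure items and the business processes live in one tree, which is exactly what lets a single traversal reach from the router to the affected processes.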

BTO

This exactly matches the philosophy of BTO (Business Technology Optimization), an emerging business philosophy to manage IT resources as a business rather than as a service bureau. Information Technology is rapidly changing to Business Technology: IT is no longer supporting the business, but IT is the business.

Merging SOA governance - in all aspects - with (e.g.) ITIL might even turn out to be the first crucial step toward competitive business survival in the currently manifesting revolution to a world ruled by intellectual capital based on the ultimate availability of knowledge.

"The picture"

Friday, December 14, 2007

Using ITIL for SOA Governance

Thursday, October 18, 2007

Wow! Oracle wants to buy BEA

Isn't it a wonderful world?

A few days ago I posted that infrastructure-vendors won't win from ERP-vendors with regard to SOA. And a few days later I motivated my thoughts here and here.

And now Joe McKendrick reports that Oracle (= Siebel, PeopleSoft, Hyperion, J.D. Edwards) wants to buy BEA.

Things go quicker than I expected...

Monday, October 15, 2007

SAP TechEd - We Have SOA

Read what Jeff Schneider - who recently attended the SAP TechEd in Las Vegas - has to tell.

"I felt like SAP really got it. Unfortunately, I felt like most of the people at the conference didn't."

That is exactly what I meant when I posted my rebellion articles on SOA out-of-the-box and "Boxed" SOA.

Monday, October 08, 2007

"Boxed" SOA

Joe McKendrick reacted to my article about SOA out-of-the-box.

"But vendor offerings are limited to tools and templates."

Of course he is right, I am talking about tools and templates. But let me explain myself in more detail. What I mean is that SOA - in a technical sense - is currently offered by suppliers of business solutions including the standards based infrastructure as I mentioned. When I buy a modern ERP-solution I get a populated SOA infrastructure with it, based on open standards. This open standards based infrastructure can also be used for the governance of external services and processes. It is not unwise to use these integrated out-of-the-box SOA products as a starting point and as enforcers.

And about this one of Joe:

"Good SOA is ultimately the product of enlightened and savvy management, smart and well-trained people, and competitive drive. And that part will never come in a box."

He is right again, but do enlightened and savvy management, smart and well-trained people, and competitive drive exclusively lead to SOA? I don't think so. That would be too easy. I think it is a prerequisite for SOA but we still must enforce SOA if we are believers... A business wise populated infrastructure with tools and templates, integrated out-of-the-box, and based on open standards will help. An unpopulated infrastructure will also help... but slower. Much, much slower!

Saturday, October 06, 2007

SOA out-of-the-box

Quote from BEA:

"The idea that you can buy SOA in a box is both amusing and dangerous. Thinking that you could buy a piece of software, install it, and then say you have SOA is the amusing part. The dangerous part is what might happen to your job once you install the software and people start to realize you are nowhere near having a service-oriented architecture."

And BEA continues:

"The reality is that before you can implement an SOA, you’ve got to lay the groundwork: a service bus where your services will be managed, a service registry for identifying services, a security framework to manage access to your services, a solid understanding of your business processes, an enterprise architecture showing your eventual goals, and most importantly, executive sponsorship for your project. Putting these pieces in place will “service enable” your enterprise and get you ready to start implementing your SOA."

BEA is not the only one who says so. And BEA, like all the others, forgets the most important part alongside the service registry: a business events registry - which is one of the most important keys to the success of SOA. Why did they forget to mention this part? Probably because they don't offer it in their product portfolio (but I may be wrong on this one).

All true I would say. But... what about ERP-vendors like SAP? SAP offers a service bus, service registry, events registry, canonical data management, business processes, services deployments (!), business monitoring, business process management, security... out-of-the-box. Yes, of course the implementation must be tuned and configured. But it's all there, out-of-the-box. The difference between infrastructure-vendors and ERP-vendors like SAP is that the infrastructure-vendors are trying to sell infrastructure to the business whilst ERP-vendors are selling business solutions to the business. Who do you think will win?

I think mid-size companies that fully rely on ERP-solutions will be the first companies with a full-fledged SOA in place. The big enterprises relying on custom development will need many more years to reach the same level of SOA maturity. They might benefit from a kick start by building their SOA around one or more open SOA based ERP-systems that are positioned as dominant business solutions at enterprise level. If they don't, the big enterprises will all fall behind their smaller competitors with regard to vital IT-maturity in the years to come.

So the idea that you can buy SOA in a box might turn out not to be as amusing as the infrastructure-vendors want us to believe. It might even turn out to be dangerous to ignore the idea. Building an unpopulated SOA infrastructure from scratch, as the infrastructure-vendors are promoting, might in the end turn into a big mistake.

Wednesday, October 03, 2007

Low-Tech Approach to Understanding SOA

Dan North published a splendid article explaining SOA by describing a 1950 business scenario and then translating it into technology-agnostic SOA terms.

A PDF can be found here. In this PDF Dan comes up with a few tips:

DO

1. Have a user

- Embedded in the business (he should not be a technical person)

- Who cares about the outcome

3. Remember that calling across the network takes time

4. Use coarse-grained services rather than a lot of little calls

Don't

1. Design for what you don't need

- Does it really need to be available 99.999% of the time?

- This includes security, availability - in fact all the “-ilities”

- Better still, don’t expose them as services in the first place

4. Expose your privates

- In other words, avoid putting implementation details into the message

- Instead package all the calls into a single, coarse-grained service call

Monday, September 24, 2007

SOA: distributed concept for business-IT alignment

Service Oriented Architecture has a business-perspective and an IT-perspective. Recognizing these two view-points makes SOA a means of business-IT alignment. BPM, BAM and business events are the key to business-IT alignment, as explained below.

Looking from the business side (top layer in the figure above), there is the decomposition of the business into interacting autonomous business functions. These functions offer services to each other and communicate - preferably - in an event-based way to preserve their autonomy. These service providers no longer focus only internally on the organization, but are also seeking external markets in which to offer their services. To excel in a competitive market a high level of autonomy is required.

This is not an IT aspect, but purely business.

A top-down approach to decompose the business into autonomous business functions is offered by IBM's Component Business Modeling. The autonomous business functions can also be composed from concrete tasks analyzed in a bottom-up approach.

Composite applications: IT-oriented SOA

On the other hand there is the composition of application constructs. This is about reusable and sharable stateless components from an IT perspective (software components). The granularity of these functional components "goes to the bottom". It's just common modular and structured design and programming practice, originating from the 70's.

Business-IT alignment: BPM, BAM, business events

The top level of such an application construct preferably maps with autonomous business functions. Current standards based technologies make it possible for IT to support events based interaction between the autonomous business functions, to monitor these events and to align interacting business functions with supporting IT-components.

Business is aligned with IT by means of BPM (business process management). BPM supports the mapping of real life business functions including their mutual interactions to their IT software equivalents. At this layer business events are mapped to software messages and business activity can be monitored (BAM) by means of analyzing the corresponding software messages. So BPM, BAM and captured business events are the hinges between business and IT: they all have a business relevance and at the same time an IT relevance.

Location and technology virtualization: ESB

The Enterprise Service Bus (ESB) is a Web Services deployment platform that virtualizes different locations and different technologies. It supports:

- Mapping from the services at the composite applications model to software deployments by means of Service Component Architecture (SCA)

- Deployment of Web Services standards like XML, WSDL, SOAP and WS-*

- Asynchronous communications by means of underlying queuing mechanisms

- Distributed access to software components by means of distributed local presence of the ESB

From a distributed point of view, the ESB forms an intelligent layer on the network. At every place where software functionality must be unleashed by a network connection, an ESB access-point is present as a connector between the software component and the network services. The ESB decouples the local software components from the network. The distributed ESB access-points rely on the network services.

Connectivity: network

At the physical layer, connectivity is offered by the network in terms of domains, routing, switching, firewalls and wiring. Supporting services at the network layer are, among others: DHCP (host configuration), DNS (domain naming and indirection), SNMP (network management), SNTP (time services), etcetera.

The ESB makes local software components agnostic for connectivity at the network layer. This releases software implementation from connectivity details. Using the Web Services based ESB platform makes the configuration of the network a lot easier. Firewall rules and IP-connectivity focus on the generic local ESB access-points and not on the distinct ever changing application components anymore.

Locations

Software components as well as business functions are physically present at locations; not necessarily in a one-to-one relationship. Software components may be located in one or more data centers, while business may be located in moving trains. Multiple instances of software components as well as business functions may be implemented at one or multiple locations. But also one instance may be stretched over multiple locations. Moreover multiple technologies may be implemented at the locations. Physical access to the systems is deployed at the locations where people are part of the business functions.

The network connects these locations and the ESB virtualizes these locations, including virtualization of technologies and (redundant) component deployments.

Conclusion

SOA is not a sequential initiative but a concurrent one at all levels.

It is modern business practice to look at the company in terms of services (non-IT). It is also modern systems development practice to look at software components in terms of services (something quite different from business services). BPM maps business services to software services. And finally it is modern infrastructure practice to virtualize locations and technologies in terms of Web Services. Not the one after the other, but concurrently...

Saturday, September 08, 2007

SOA and data

Nick Malik posted an article the other day titled: SOA drives an odd data model. As an SOA-architect you should read it. I recommend reading all of his postings as he has great insights. If there is one mandatory blog on the SOA-EDA subject to subscribe to, it is this one.

My own thoughts on the subject of SOA and data are (in short and generalized):

- Data should be modeled within the boundaries of a service. This principle helps in determining the right level of granularity of the services.

- Also data persistency should be organized within the boundaries of a service.

- This may lead to redundant data as an architectural principle, which is right to maintain independency.

- Event-driven architecture principles are at the basis of keeping the redundant data in sync.
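As a rough sketch of these four points, the example below gives each service its own private store and keeps the redundant copy in sync through a published event. Everything here is an assumption made for illustration: the `EventBus`, `CustomerService` and `ShippingService` classes and the `CustomerAddressChanged` event name are hypothetical, and the in-process bus stands in for real messaging middleware.

```python
class EventBus:
    """Minimal in-process publish/subscribe bus (illustrative only)."""

    def __init__(self):
        self._subscribers = {}

    def subscribe(self, event_type, handler):
        self._subscribers.setdefault(event_type, []).append(handler)

    def publish(self, event_type, payload):
        for handler in self._subscribers.get(event_type, []):
            handler(payload)


class CustomerService:
    """Owns the customer data; no other service reads its store directly."""

    def __init__(self, bus):
        self._bus = bus
        self._addresses = {}

    def change_address(self, customer_id, address):
        self._addresses[customer_id] = address
        self._bus.publish(
            "CustomerAddressChanged",
            {"customer_id": customer_id, "address": address},
        )


class ShippingService:
    """Keeps its own redundant copy of the addresses it needs."""

    def __init__(self, bus):
        self._addresses = {}
        bus.subscribe("CustomerAddressChanged", self._on_address_changed)

    def _on_address_changed(self, event):
        self._addresses[event["customer_id"]] = event["address"]

    def shipping_address(self, customer_id):
        return self._addresses.get(customer_id)


bus = EventBus()
customers = CustomerService(bus)
shipping = ShippingService(bus)
customers.change_address("c-1", "Main Street 1")
print(shipping.shipping_address("c-1"))
```

The shipping service never queries the customer service; it can keep answering from its own copy even when the owner of the data is unavailable, which is the independence the redundancy buys.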

Thursday, August 30, 2007

Thanks Udi

Listen to what Udi Dahan tells about my vision about the ESB evolution on Dr. Dobb's podcast. He talks about my posting ESB: Service Bus or Data Bus. Udi thinks it will take about 5 years or so before my prediction comes true. I take that as a compliment on my vision.

Thanks for spending time on my thoughts, Udi.

Friday, August 24, 2007

Asynchronous and Synchronous: keep it simple

Pat Helland wrote in a recent post: Asynchronous and Synchronous are Subjective Terms.

Pat says that one communication line may be synchronous AND asynchronous depending on which "with respect to". That's the trick: it depends on your viewpoint like every observation in life depends on your viewpoint. I would say: Keep It Simple. Choose a viewpoint and then ask yourself: Do I wait for an answer? Yes: synchronous. No, I am done: asynchronous. If it's about one single communication stack: first choose the layer, then decide.

It drives Pat kinda nuts - as he says himself - when he hears somebody say "such-and-so is asynchronous"... or synchronous. The opposite applies to me: if somebody can't tell me whether his communication is synchronous or asynchronous I push him to the end to get it out. Because it matters to the design...
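The "do I wait for an answer?" test can be made concrete with a small sketch. It is not taken from Pat's post; the `order_sync` function, the `OrderDesk` class and the price list are hypothetical names chosen for illustration, and Python 3.8 or later is assumed.

```python
import queue
import threading

PRICES = {"apple": 3, "pear": 4}  # hypothetical price list


def order_sync(item):
    """The caller waits for the answer: synchronous from its viewpoint."""
    return f"ordered {item} at {PRICES[item]}"


class OrderDesk:
    """The caller drops a message and is done: asynchronous from its viewpoint."""

    def __init__(self):
        self._inbox = queue.Queue()
        self.processed = []
        self._thread = threading.Thread(target=self._work)
        self._thread.start()

    def submit(self, item):
        self._inbox.put(item)  # returns immediately, no answer expected
        return "accepted"

    def close(self):
        self._inbox.put(None)  # sentinel: stop after draining the inbox
        self._thread.join()

    def _work(self):
        # One layer down, the worker itself waits for each answer.
        while (item := self._inbox.get()) is not None:
            self.processed.append(order_sync(item))


desk = OrderDesk()
print(order_sync("apple"))
print(desk.submit("pear"))
desk.close()
print(desk.processed)
```

Note that the worker inside `OrderDesk` calls `order_sync` and waits for it, so the same order is asynchronous at the caller's layer and synchronous one layer below. That is the "first choose the layer, then decide" part.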

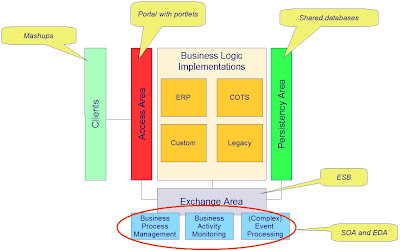

What is EAI?

What is Enterprise Application Integration or EAI? Application Integration is – ironically – about application division. This article explains how applications are divided into separated components and how the components are glued together. Several “glue-areas” are recognized where mashups, portals, shared databases, SOA and EDA all play a role in gluing the pieces together.

In this article several steps are described to finally reach the “integrated application”. These steps however are not meant as a logical sequence. In practice these steps will evolve concurrently over time, led by architectural directions and roadmaps.

Let us start with “the chaos”.

Context

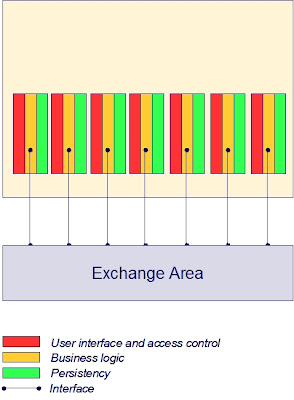

Many current application landscapes consist of an organically grown collection of monolithic applications that interact by many different application- and technology-specific interfaces. All these applications have their own implementations of access control, user interfacing, persistency and business process control. Interface control is merged with business rules within the different applications, representing the overall business processes.

This leads to:

- Complex and long lasting maintenance efforts

- Data inconsistency

- Error-prone interface maintenance because of many (sometimes unknown) dependencies

- Exponential growth of complexity when extending the system

What is one thing we can do to bring order in the chaos? The answer is: strip the applications down to core business rules implementations by externalizing shared areas for exchange, access and persistency.

Externalize shared Exchange Area from applications

Bringing the interface control outside the application into a shared facility to be used by all applications results in uniformity of message exchange. Generic data transformation and routing services offer a layer of indirection between the communicating applications, which makes the applications less dependent on knowledge of each others data formats, technologies, addressing and location. In this layer generic facilities may be implemented to secure data transport in a standards based and sophisticated way, without any impact on the applications themselves.
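A minimal sketch of such an exchange area is given below, assuming a shared canonical message format between sender and receiver. The `ExchangeArea` class, the application names and the field names are all hypothetical; a real exchange facility would add queuing, security and error handling.

```python
class ExchangeArea:
    """Hypothetical shared exchange facility: transforms and routes messages."""

    def __init__(self):
        self._to_canonical = {}  # sending application -> transformation function
        self._routes = {}        # message type -> list of (from_canonical, deliver)

    def register_sender(self, application, to_canonical):
        self._to_canonical[application] = to_canonical

    def register_receiver(self, message_type, from_canonical, deliver):
        self._routes.setdefault(message_type, []).append((from_canonical, deliver))

    def send(self, application, message_type, raw_message):
        # Transform the sender's own format into the shared canonical format.
        canonical = self._to_canonical[application](raw_message)
        # Route to every receiver registered for this message type.
        for from_canonical, deliver in self._routes.get(message_type, []):
            deliver(from_canonical(canonical))


exchange = ExchangeArea()
billing_inbox = []

# The CRM application only knows its own format.
exchange.register_sender("crm", lambda raw: {"customer_name": raw["cust_nm"]})
# The billing application only knows its own format, too.
exchange.register_receiver(
    "CustomerCreated",
    lambda canonical: {"DebtorName": canonical["customer_name"].upper()},
    billing_inbox.append,
)

exchange.send("crm", "CustomerCreated", {"cust_nm": "Acme"})
print(billing_inbox)
```

Neither application refers to the other by name, address or data format; adding a second receiver is a registration in the exchange area, not a change to the CRM application.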

Another huge advantage is that (changes of) process definitions can be accomplished much easier. Business processes that involve more than one application are established in the interface control mechanisms. Taking these mechanisms outside the applications allows for a more flexible configurable solution. This principle, combined with a design style to break down the business logic within an application into well defined components is the basis for BPM (Business Process Management).

The effects of externalizing an exchange area are:

- Less maintenance efforts

- More speed and flexibility in changing business processes

- Uniformity of management

- Linear growth of complexity when extending the system

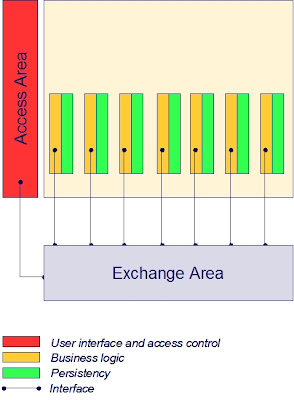

Externalize shared Access Area from applications

Bringing user interface and access control from the individual applications to a generic facility makes the application:

- Independent from user devices

- Independent from user location

- Independent from user interface

- Independent from application access control

This leads to:

- Less maintenance efforts when changing overall presentation

- Uniformity and consistency of presentation

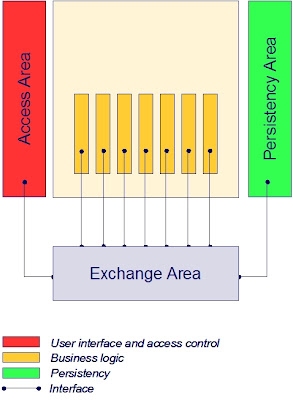

Externalize shared Persistency Area from applications

This externalization principle is very common for decades already: get all the fine grained data persistency logic out of the application and use shared database services. The persistency area offers services for:

- Virtualization

- Real-time data synchronization

- Data warehouses

- Recovery

- Meta data management

This leads to:

- More up-to-date data (real-time synchronization)

- Consistency of data (synchronization, data warehouse)

- Less database management (recovery, meta model)

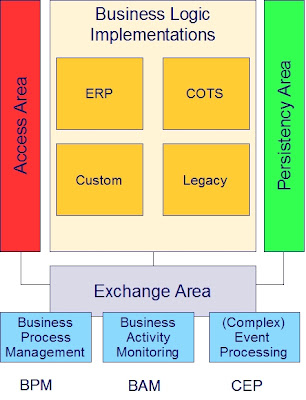

Virtual one single integrated “application”

Externalizing these three shared areas from the applications leaves a fourth area where the implementation of business logic resides. Business logic may be implemented by ERP, COTS, custom code and legacy. These concrete ways to deliver business logic don’t exclude each other, but overlap (e.g. the greater part of legacy will mostly be custom code).

Finally this architectural design leads to virtually one big integrated enterprise application.

Once in place, opportunities grow. In particular the exchange area is interesting. When business logic implementations are broken down to adequately sized and designed components, the exchange area will enable standards based BPM, BAM and CEP:

- Business Process Management: Flexible business process definition/maintenance

- Business Activity Monitoring: Real-time view on operational business state

- Event processing: React proactively to potential bottlenecks; correlate detected events to generate new events

Mashups: integration at the client side

There is however one more aspect to application integration at the user interface layer. And that is the clients. Portals facilitate integration at the server side. At the client side (e.g. the web browser) new technologies are introduced based on JavaScript and AJAX. Integration at the client side of the user interface is called a mashup. Mashups bring applications together in the browser while portals bring applications together with the help of portlets on the server.

The glue

The externalized areas can all be characterized as Enterprise Application Integration, each of which is very different from the other in nature. The areas each offer their own kind of services to glue the components together.

The multiple faces of Enterprise Application Integration recapitulated:

- Integration at the data layer: the glue is shared databases, DBMS, data warehouse

- Integration at the business logic layer : the glue is ESB, SOA, EDA

- Integration at the user interface layer (server side): the glue is portlets in portals

- Integration at the user interface layer (client side): the glue is mashups

Friday, August 17, 2007

SOA-selling battle goes on in blogosphere

Mike Kavis commented on my post "Business doesn't ask for SOA". He says it pains him to see my article. Mike refers to an article of his own. I enjoyed reading his article; IF I were to sell SOA to the business I would have very much faith in his approach.

Of course SOA will help the business move forward a lot. Enabling BPM is a good example. The point however is: should it matter to us - from an IT point of view - that the business understands SOA? WHY should they understand? From a business perspective you might say: it is important to know how to "service orient" the organization. And then again: should IT folks tell the business folks they are currently not modeling and organizing their processes correctly? That they should service orient their business? I think business people - in general - know very well how to organize their business; and they must be free not to service orient... while at the same time IT does. It's two worlds.

If IT-funding is the reason to convince the business of SOA: that is not the right direction. I think it should be an IT-investment to put IT-things in place and it must not be a business investment. IT must not be dependent on the business for innovating its own shop and putting application infrastructure in place. If you think differently, I guess you don't understand service orientation, because - funnily enough - such an independent IT-shop is a perfect example of what is meant by service orientation, from a business point of view! If this all gets a bit confusing to you, you might read my posting about the two worlds of SOA.

Selling SOA to the business is a waste of time

Are you still selling SOA to your business? You better stop it, because you are wasting your time! And I really know what I am talking about.

As Nick Malik (Microsoft) says:

- We don't discuss SOA with the business because we don't discuss professionalism or intelligence with our business... it is assumed and required that we behave with best practices and bring the best available design. That includes SOA.

Saturday, August 11, 2007

Bringing SOA to Life

I came across this video-presentation on InfoQ. Mikkel Hippe Brun, Chief Consultant at Danish National IT and Telecom Agency, introduces Denmark's national Service Oriented Infrastructure.

The title of this posting is a bit misleading, because it is not really about SOA in the way we currently define SOA (huh...?); it is about web services. But by all means it is a highly interesting use case Mikkel Hippe Brun is talking about. The Danes have built a public web services infrastructure based on replicated UDDI registries. The architecture is founded on standards based:

- Address Resolution

- Reliable Messaging

- Message Level Security

- Signature

The architectural overview is very well described in this document which can be found among some other interesting architecture documents on their web-site.

I highly recommend watching this presentation and reading the architectural description.

Why? Because this innovative use case shows crystal clear the need for web service technologies - in the current spirit of the age - and how these technologies will change the way we do business. It shows how technology pushes business: you won't get any invoice paid by the Danish government, unless delivered electronically. This is mandated by law. I think this is the ultimate way to drive innovation. Thrilling...!

Monday, August 06, 2007

The big mistake about EDA

I frequently get questions from people who present me with a request-reply scenario and ask me why, for God's sake, they should implement this in an asynchronous way. They come up with all the (performance related) disadvantages of such an implementation. And finally they conclude that EDA may not be such a good idea, looking at me with a big question mark in their eyes.

These people all make one big mistake: they equate the asynchronous implementation pattern to EDA.

It is true that EDA leads to asynchronous patterns, but that doesn't mean that asynchronous patterns are equal to EDA.

EDA is about scenario design, not about scenario implementation. I think of EDA as a business event focused design approach. To me EDA means starting the grand design by recognizing in your business the events that your business plans to react to. Forget at this level about IT-implementation, and just focus on the event definition. The initial event definition may be deduced from what you currently need to know to react appropriately to this event. Here the consuming service(s) come onto the scene. Next you define the process that detects the event. Here the producing service(s) come onto the scene. The craftsmanship of the designer (or team) is in defining the business event in such a way that it not only fits the current requirements, but also future requirements and out-of-scope usage (event representation reuse in other contexts). This can be accomplished by focusing on the characteristics of the event itself - in impartial isolation - when defining the event, and not on the currently known consuming services. The currently foreseen consuming services only help to get an initial start in defining events.
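To illustrate the design order described here (event first, then consumers, then producer), the sketch below defines an event purely in terms of what happened and lets consumers attach themselves independently. The `OrderShipped` event, its fields and the `ship` function are hypothetical examples, and the in-memory subscriber list says nothing about how the event would actually be transported.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class OrderShipped:
    """Describes what happened, not who is expected to react to it."""
    order_id: str
    shipped_on: date
    carrier: str


subscribers = []


def publish(event):
    for handle in subscribers:
        handle(event)


# Producing service: the process that detects the event and publishes it.
def ship(order_id, carrier, today):
    publish(OrderShipped(order_id, today, carrier))


# Consuming services: the first was foreseen, the second was added later
# without touching the event definition or the producer.
notifications = []
shipments_per_carrier = {}


def notify_customer(event):
    notifications.append(f"Order {event.order_id} shipped")


def count_per_carrier(event):
    shipments_per_carrier[event.carrier] = shipments_per_carrier.get(event.carrier, 0) + 1


subscribers.append(notify_customer)
subscribers.append(count_per_carrier)

ship("o-1", "DHL", date(2007, 8, 6))
print(notifications, shipments_per_carrier)
```

The carrier field is not needed by the notification consumer, but it belongs to the event itself, which is what made the later reuse possible.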

When you come to define the event-producing and -consuming services you are diving into a more detailed layer of abstraction. It is very well possible (and desirable) to continue the EDA design approach at that level and all lower levels. Depending, however, on the level of abstraction you will start to recognize stronger cohesion in terms of ACID-transactions and dialogs. The deeper you dive, the stronger the cohesion will be. This means, even while still at a functional level, you will start recognizing request-reply mechanisms. This is the level where the big mistake originates from. Implementing such request-reply mechanisms in an asynchronous way may offer advantages with regard to required mediation of the messages and loosely coupled addressing, but it will always be a trade-off with performance issues and available middle-ware infrastructure. Mind that this has nothing to do with EDA.

Friday, August 03, 2007

BPM versus SOA

There is a debate going on about the relationship between BPM (business process management) and SOA (service oriented architecture). Joe McKendrick reports on part of this debate. Also James Taylor adds a coin. Nick Malik plays his role as well and there are more.

To be honest, I never realized this subject was ambiguous. But now I do. I would love to join the debate.

- Isn't it fair to state that BPM is about a business process model and SOA about a way to abstract business functions (or process steps; sorry Steve, it's a matter of definition) from implementation?

- Isn't it fair to state that BPM is about the top level layer (business view) of inter-related composite structures?

- Isn't it fair to state the composite structures are modeled by SOA?

- Isn't it fair to state that BPM has a horizontal scope and SOA a vertical scope?

- Isn't it fair to state that SOA is the link between the business view (top level layer) and the IT-view (sub level layers)?

I hope this posting is a valuable contribution to the BPM-SOA debate.

The Role of Event Processing in Modern Business

Recently I published some patterns with regard to event related implementations. Among others:

- How to implement a loosely coupled process flow (EDA)

- How to implement Business Activity Monitoring in an SOA

- How to mediate semantics in an EDA

If you are interested in EDA then you really have to read it...

Wednesday, August 01, 2007

How to implement identity based Service Access Control in SOA

Identity

As a consequence of the growing openness of IT-infrastructure (Internet, SaaS, public services, remote access, Web 2.0), security based on fortresses (isolated environments) is becoming less adequate. Secondly there is an awareness that security incidents are not only caused from outside these isolated environments, but also from inside such an environment. Together with the introduction of compliance regulations (being able to quickly prove who did what and when, on penalty of legal sanctions), these aspects drive a trend toward identity management based security mechanisms. These mechanisms are based on the idea that all components in an IT-environment (people, devices, software) that can act more or less autonomously have an identity. Access to resources is controlled at the resource-level and is based on the identity credentials of the user of that resource.

Service Access

This pattern describes an identity based solution for protecting services against undesired use. Without protection every arbitrary service can call any other arbitrary service. This raises risks with regard to intended and unintended violations of the system. Also with regard to compliance regulations (knowing who did what and when) such a situation is undesirable. These risks are present even in a firewall-secured environment.

The solution described in this pattern assumes the availability of a security infrastructure based on identity management.

When a service is called by a caller (service access) the identity credentials of the caller are added to the message. The called service can only be accessed via a proxy (security proxy). This proxy will validate the access rights of the caller based on the credentials passed in the message and the defined access policies before passing the message to the called service. In case of a synchronous communication implementation the identity credentials of the called service are added to the reply to allow the caller to validate the integrity of the reply.

This model supports authentication and authorization of service calls:

- Identity Credentials: proof that a service is who it claims to be (authentication)

- Policies: managing what service may be called by whom (authorization)
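
The proxy idea can be sketched in a few lines of Python. This is only an illustration under assumptions: the names (SecurityProxy, Message, pricing_service) are hypothetical, and the credential is a plain string that is assumed to have been authenticated already, where a real identity infrastructure would use signed tokens.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set


@dataclass(frozen=True)
class Message:
    caller_id: str   # identity credential of the caller (assumed already authenticated)
    payload: str


class AccessDenied(Exception):
    """Raised by the proxy when no policy allows the call."""


class SecurityProxy:
    """Hypothetical proxy that guards exactly one service."""

    def __init__(self, service_id: str, service: Callable[[str], str],
                 allowed_callers: Set[str]) -> None:
        self.service_id = service_id
        self._service = service
        self._allowed_callers = allowed_callers  # the access policy

    def call(self, message: Message) -> Dict[str, str]:
        # Authorization: check the caller's identity against the policy
        if message.caller_id not in self._allowed_callers:
            raise AccessDenied(f"{message.caller_id} may not call {self.service_id}")
        result = self._service(message.payload)
        # Synchronous reply carries the identity of the called service
        return {"replier_id": self.service_id, "result": result}


def pricing_service(payload: str) -> str:
    # The service itself contains no security logic at all
    return f"price for {payload}: 42"


proxy = SecurityProxy("pricing", pricing_service, allowed_callers={"order-service"})
reply = proxy.call(Message(caller_id="order-service", payload="item-1"))
```

Note that the service function knows nothing about the proxy; the policy can be changed, or the proxy replaced, without touching it.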

By establishing trust relations with external companies, secured service access can be offered to external or federated parties without the need to maintain the external identities; only the (e.g. role based) policies have to be maintained (relying on the adequate role allocation by the trusted party).

Identity Credentials Propagation Trail

The service-access and security-proxy combinations can be chained together. This allows for complex access policies based on a complete chain of identity credentials. For example:

- If D is called by C and C was called by A: access allowed

- If D is called by C and C was called by B: access denied
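
A policy on a chain of credentials could look like the sketch below. The representation of the trail as a list of identities and the policy table ALLOWED_TRAILS_FOR_D are assumptions made for this illustration only.

```python
from typing import List, Set, Tuple

# Hypothetical policy for service D: allowed (caller's caller, direct caller) pairs
ALLOWED_TRAILS_FOR_D: Set[Tuple[str, ...]] = {("A", "C")}


def is_allowed(trail: List[str]) -> bool:
    """Decide access to D; `trail` lists identities from first caller to direct caller."""
    # Only the last two identities in the trail matter for this particular policy
    return tuple(trail[-2:]) in ALLOWED_TRAILS_FOR_D


via_a = is_allowed(["A", "C"])  # D called by C, C called by A
via_b = is_allowed(["B", "C"])  # D called by C, C called by B
```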

Anti-pattern: security-agent nested in the service

There are products available that offer comparable solutions where a security agent is built into the service instead of using an external security proxy. Such a solution may be qualified as an anti-pattern. Mixing up security components with the service components decreases flexibility and increases vendor lock-in. An infrastructure based on embedded agents leads to a lot of extra effort to change the services when you want to choose another vendor in the future; proxies can easily be replaced without any effect on the services.

Identity Credentials in Business Events

Besides passing identity credentials to services (SOA), identity credentials can also be added to published business events (EDA). This allows the consumer of the message to validate the identity of the source of the message. A trail of identity credentials may be added to the published messages in a process flow to allow for determination of the process flow route, based on the identities of the services in the flow. The figure below illustrates the pattern of identity credentials in a business event.

Saturday, June 30, 2007

ESB: Service Bus or Data Bus?

The ESB is a lot about messaging and therefore a better name perhaps would be "Enterprise Data Bus". It's the asynchronous messaging that needs such an infrastructure with persistency- and mediation facilities. All the WS-* standards are about messaging as well, leveraging the message itself to tell the infrastructure how the message has to be handled (see this). I think WS-* will make it possible to have the ESB evolve from a vendor-product to a concept implemented in the operating systems and network devices that understand WS-*. Then you can leave out the prefix "Enterprise" and we will be ready for a universal asynchronous data bus over the Internet (or any other network you like). This will help break the current "services centric" idea of SOA into a "messages centric" perspective.

Friday, June 29, 2007

How to implement a loosely coupled process flow (EDA)

A process flow contains subsequent activities or steps. These may be manual or automated activities. In most cases an instance of a process flow uses a persistent document that holds the state of that process instance. This document contains data to be queried.

Figure 1 shows services 1 through 4 representing subsequent steps in a process flow. “Dossier” represents the collection of persistent documents holding the process states. Service X detects the state of the flow and updates the dossier.

Services that represent the flow are independent of the dossier

In this pattern a service (representing a business activity) results in a business event. The business event triggers the next service in the sequence by means of a subscription of this subsequent service to this event. Every step in the flow delivers (messages about) event types. All services in the flow are completely autonomous (stateless) in relation to one another and to the dossier. I.e. every service can complete its task fully based on the data stored in the message (modeled business event) it is subscribed to. Services 1 through 4 do not query the persistent dossier on behalf of the process flow; perhaps they do query the dossier for other reasons, but in essence they are completely decoupled from the dossier and they don’t need to know about the existence of the dossier.

The dossier persists on behalf of services that have an interest in the current state or historical states of the process. In general these will be other services than those forming the process flow.

Fully contained messages

For example (see figure 1), to have service 3 perform its task, the message about event 2 will contain the full context of event 2. This may (and mostly will) mean that the data of event 2 will contain a lot of the data of event 1. This mechanism allows for connecting new services to the flow, without these services having any knowledge of the other services in the flow nor any knowledge of the persistent dossier.
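
To make this concrete, here is a minimal in-memory sketch in Python. The EventBus class, the topic names and the order fields are all hypothetical, and dispatch is synchronous for simplicity, whereas a real EDA would use asynchronous messaging. Each service adds its own result to the full context it received, and the dossier is kept by a separate recorder (playing the role of service X) that the flow services know nothing about.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List, Tuple

Event = Dict[str, Any]
Handler = Callable[[Event], None]


class EventBus:
    """Hypothetical in-memory publish/subscribe bus (synchronous for simplicity)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        for handler in list(self._subscribers[topic]):
            handler(event)


bus = EventBus()
dossier: List[Tuple[str, Event]] = []  # persistent process states, kept outside the flow


def make_recorder(topic: str) -> Handler:
    # "Service X": records every event type in the dossier
    def record(event: Event) -> None:
        dossier.append((topic, dict(event)))
    return record


def service_2(event: Event) -> None:
    # Works only with the data in the message, then publishes the full context
    bus.publish("order_checked", {**event, "credit_ok": True})


def service_3(event: Event) -> None:
    bus.publish("order_packed", {**event, "parcel_id": "P-1"})


# Recorders are subscribed first so the dossier is written in flow order
for topic in ("order_placed", "order_checked", "order_packed"):
    bus.subscribe(topic, make_recorder(topic))
bus.subscribe("order_placed", service_2)
bus.subscribe("order_checked", service_3)

bus.publish("order_placed", {"order_id": 7, "customer": "ACME"})
```

The last event carries the data of the earlier events as well, which is exactly what allows a new subscriber to be plugged in without knowing the rest of the flow.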

Separate topic for every business event

So the messages about the different event types within one process flow may look much alike. They may even be identical, but they concern different business event types and so have different semantics in the flow. That is why they will always be designed as separate message types (topics) and not as one message type with a status code plumbed into it.

Anti-pattern

Using such a status code must be regarded as an anti-pattern. In such a solution, the service has to subscribe based on the value of an artificially added field in the content of the message. This implies knowledge of the existence, values, semantics and format of this field when subscribing services to business events and also when building the publishing services. This increases complexity and decreases robustness of the system.

By distinguishing different event types, even if their contents and formats are equal, no specific semantic nor syntactic knowledge about the content of the message is needed to subscribe services to events.

Declarative process definition

A loosely coupled process flow as clarified in this pattern allows for declarative process definitions. The process flow is defined by first defining the subsequent business events and then defining which services consume and publish these events. A strong system design contains declarative descriptions of all the process flows within the system. Figure 2 shows an example of the process flow as depicted in figure 1.

Conditional branches in the flow (see figure 3), like If-then-else or Do-case constructs, are implemented by content based subscriptions. These conditions are formal decisions based on business rules where these business rules may be very complex constructs. (here I enter the domain of my friend James Taylor)

The conditions are not defined within the services, but outside the services. This allows for easy changes by reconfiguring the publish-and-subscribe mechanism without modifications to the service-implementations. The services will be more robust by retaining their pure functions.
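
A content based subscription can be sketched as a subscription that carries its own condition. In this hypothetical Python example the ContentBasedBus class, the amount threshold and the handler names are assumptions; the point is only that the if-then-else lives in the subscription configuration and not in the services.

```python
from typing import Any, Callable, Dict, List, Tuple

Event = Dict[str, Any]
Handler = Callable[[Event], None]
Condition = Callable[[Event], bool]


class ContentBasedBus:
    """Hypothetical bus where each subscription carries a business-rule condition."""

    def __init__(self) -> None:
        self._subs: Dict[str, List[Tuple[Condition, Handler]]] = {}

    def subscribe(self, topic: str, handler: Handler,
                  condition: Condition = lambda event: True) -> None:
        self._subs.setdefault(topic, []).append((condition, handler))

    def publish(self, topic: str, event: Event) -> None:
        for condition, handler in self._subs.get(topic, []):
            if condition(event):  # the branch is decided outside the service
                handler(event)


handled: List[str] = []
bus = ContentBasedBus()

# The if-then-else is pure configuration; the handlers contain no conditions
bus.subscribe("order_placed", lambda e: handled.append("manual_review"),
              condition=lambda e: e["amount"] >= 1000)
bus.subscribe("order_placed", lambda e: handled.append("auto_approve"),
              condition=lambda e: e["amount"] < 1000)

bus.publish("order_placed", {"order_id": 1, "amount": 250})
bus.publish("order_placed", {"order_id": 2, "amount": 5000})
```

Changing the threshold means changing a subscription, not a service implementation.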

Figures 4 and 5 illustrate how to add additional business rules to the process flow.

The process definition makes use of the results of the applied business rules which are defined separately from the process flow definition.

Implementation by a notification server

One might think of a notification server to trigger services by events at run time. The conditions are the result of business rules-based filters on the published events that the notification server evaluates to trigger the correct services. So the notification server should have access to a repository that holds the declarative process definitions and a repository that holds the business rules.

One might also think of the implementation of service X and the persistent dossier (see figure 1) by the notification server. If the notification server logs all events and the repositories hold history logs of the process definitions and business rules, it will be possible to derive all process states and decisions for any moment in time from these three sources. This construction can support very rich business intelligence.
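
Deriving a process state from the event log alone could look like this sketch. The log layout (timestamp, process instance, event type) and the function name state_at are assumptions; the histories of process definitions and business rules are left out to keep it short.

```python
from typing import List, Optional, Tuple

# Hypothetical event log: (timestamp, process instance id, event type)
EVENT_LOG: List[Tuple[int, str, str]] = [
    (1, "order-7", "order_placed"),
    (3, "order-7", "order_checked"),
    (4, "order-8", "order_placed"),
    (9, "order-7", "order_packed"),
]


def state_at(log: List[Tuple[int, str, str]], instance_id: str,
             moment: int) -> Optional[str]:
    """Return the last event type logged for the instance at or before `moment`."""
    state: Optional[str] = None
    for timestamp, instance, event_type in sorted(log):
        if instance == instance_id and timestamp <= moment:
            state = event_type
    return state


state_of_order_7_at_5 = state_at(EVENT_LOG, "order-7", 5)  # "order_checked"
```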

Why?

This pattern makes it easy to implement process flows by declarative configurations and to change the flows on an ad hoc basis, also by configuration.

Because the process flow is defined in terms of publish/subscribe and autonomous stateless services, it becomes easy to add, change and remove steps and branches in a plug-and-play-like way, without breaking the system.

Based on this pattern, complex processes can be accomplished that - despite their complexity - are yet easy to change.

Saturday, June 23, 2007

Things you always wanted to know about EDA

Great video presentation in which Ian Cartwright presents some of his work (developed with Martin Fowler) on Event Patterns.

If you want to get a grip on EDA, then watch this video. Ian explains some interesting patterns, which may open your eyes.

Really, really good stuff!!

Tuesday, June 19, 2007

There is no one-size-fits-all approach to SOA

Are you looking for that one most suitable approach to SOA? An approach that covers all your needs, respecting (the lack of) your company's IT governance, the (im)maturity of the IT staff, your heterogeneous application landscape, the (lack of) innovation forces (including budget)? You won't find it.

Some projects will focus on new software for old processes, and others on new processes with old software. Or both new processes and new software (lucky you!). Or old processes with old software (bug fixing, poor you!). And then there are proprietary SAP-solutions and eSOA-based SAP-solutions and combinations of proprietary and eSOA-based SAP-solutions. Some ownerships are crystal clear, others are political disasters. Managers may not support innovation, looking forward to the bottle of champagne at the date of the deadline. Others don't like champagne, but may or may not be very stubborn on architectures they are not educated in to understand. Developers may or may not be averse to things that are new to them. Every project has its own context characteristics with regard to people, knowledge, motivation, business and technology. Not all projects are ready for new things; if you have bad luck, MOST projects are not ready.

And all of this within one single company or even worse: within one big project. So, no, you won't find that ultimate SOA approach for which the gurus tell you to put all the right things in place as a prerequisite to the success of SOA. You just can't get all the right things in place. That's not our world and will never be our world. We will have to deal with chaos. Also when it comes to SOA approaches. Take it or leave it.

But what can you do to succeed in SOA? Well, my suggestion is:

Stop trying to convince averse project teams and averse or cynical managers of the benefits of SOA. Instead, look for curious, innovative managers with charisma and seek to empower your project teams with passionate new age developers, no matter the technological or business context. And then start doing the things you think (know) you should do with an approach that fits the project context. That's how to contribute step by step to SOA in the context of chaos. And you don't even have to mention the word SOA. It's the team's respected - and trusted - craftsmanship that leads to SOA and to the company's SOA heroes of the future.

Monday, June 18, 2007

Zachmann versus Zachman

Quoted from Zachmann (beware!):

- SOA is a plausibly good and sometimes even useful idea that has been blown far out of proportion by various parties with their own axes to grind.

- By all means, include real SOA in your range of options. If you get the chance, enjoy the cocktails and the golf! Don't, however, let yourself be beguiled into believing that it's really anything different from what you can already do quite well using Web services with .NET. It most certainly is not.

- SOA simply means designing application systems that create and access local or network services

Okay, fair enough, he is right in: The basic architectural ideas date back to the '60s.

But thank goodness it is not Zachman with single N, author of The Zachman Framework for Enterprise Architecture, but Zachmann with double N who wrote the rest of this joke equating SOA with .NET web services.

In one of my previous postings I concluded that developers - in general - don't get the clue of SOA... See what I mean?

Wednesday, June 13, 2007

Canonical Model, Canonical Schema, and Event Driven SOA

Nick Malik just posted a great article on the importance of:

- Enterprise Canonical Data Model

- Canonical Message Schema

- Event Driven Architecture

- Business Event Ontology

My advice is: don't even think about doing SOA without fully understanding Nick's article.

Great Nick, you have all my credits.

The magical A of SOA and EDA

We are all talking about SOA and EDA, trying to explain what services are and – a bit less intensive – what events are. But what is the A in SOA and EDA? What is architecture at all?

There are formal definitions (IEEE 1471 for software architecture) and less formal definitions of architecture. Many books and articles are written about the subject architecture.

In my lingo architecture means "purposeful composition". This implies:

- Components

- Separation

- Arrangement

- Connections

- Purpose

The enterprise may be your scope of the architecture with regard to SOA and EDA. But be careful with enterprise level IT architecture. The enterprise IT is fading into a bigger global IT in the context of Internet, SaaS, Service Orientation, outsourcing, off-shoring, and so on. All kids in the street are participating with their own LEGO blocks to build your castle.

One of the purposes of IT architecture at the enterprise level is the ability to follow changes in the context. Not only in the business context, but also in the technology context. So the components must be defined and connected in a way that makes it possible to disconnect them and reconnect them in other arrangements and other contexts. This puts constraints on the components and here is where autonomy based on strong cohesion and loose coupling comes into play.

SOA is an architectural style that recognizes services (functionality representing process steps) as components. EDA is an architectural style that recognizes events (messages representing process states) as components. Both styles focus magically on the same architecture from an inverse viewpoint. The components in EDA are strongly related to the component connections in SOA; events connect services by transferring process state from one service that detects and publishes events to other services that are triggered by specific events. On the other hand the components of SOA are strongly related to the component connections in EDA; services connect events by transferring the process from one state to another; a service triggered by an event may cause a new event bringing the process into a subsequent state. The two main purposes of both SOA and EDA are (among others):

- Support ease in rearranging the components (commonly known as the LEGO metaphor)

- Support concurrent use of components in different contexts (commonly known as "reuse")

SOA prescribes constraints on the services to fulfill these purposes (autonomy, strong cohesion, statelessness). EDA prescribes constraints on the messages to fulfill these purposes (loose coupling, canonical messages with self contained context). SOA can hold synchronous patterns as well as asynchronous patterns. As EDA prescribes an asynchronous pattern, EDA not only puts constraints on the messages, but on the interacting services as well (publish/subscribe, deliver and consume fully contained messages). Note that in consequence EDA puts constraints on SOA, but SOA not on EDA. From this perspective EDA might be seen as of a higher architectural magnitude than SOA.

This does not improve business processes themselves, but it improves support for adaptability of business processes; so it also supports changing lousy processes from bad to worse. Ultimately business processes may be changed without any IT changes.

It is like painting a GUI-screen where the buttons, entry-fields and display-fields may be reshuffled over and over again to the most ugly and poor screen design, without breaking the underlying functional support. Just as easy as that.

Why is screen painting so easy?

- Because functionality of the screen objects is based on stateless autonomous functions (reusable in different context)

- Because interaction between the screen objects is based on self contained messages representing the state of the screen (button_pressed, mouse_clicked, field_entered)

- Because the screen objects are configurable by the user to the user’s needs and wishes (color, position, size, values)

I think I am a daredevil...!

Saturday, June 02, 2007

SOA is not Business Agility but offers Business Agility

Is it the task of the CIO or any other IT-guy to convince the business people to "service orient" their processes? I don't think so. I think it is the task of IT-folks to organise and architect IT in such a way that it can quickly follow changes in the business context, or even better: to be agnostic to changes in the business context. It is the responsibility of business people to optimize their processes and organisation structures with the freedom for whatever reason not to do so.

I think, from an IT point of view, that SOA should offer agility to the business. Business must be able to change without headaches about IT. I think a well designed SOA even supports a badly structured organisation and clumsy business processes. That is the power of SOA, IMHO.

Friday, June 01, 2007

How to implement Business Activity Monitoring in an SOA

The essence of this pattern is that services that deliver data to be monitored are decoupled from the monitoring functionality. The responsibility of the services is limited to publishing the relevant data. In a well designed loosely coupled system, this data will probably already be available, because in such a design autonomous services exchange business events in a formal format to establish process flows. The services do not have any knowledge of the (existence of the) monitoring functionality. You might say that in a correctly designed EDA-based system, a properly configured business activity monitor can be plugged in on the fly.

The monitor consists in its simplest form of event processing components and a dashboard for publishing alerts as depicted in the figure above.

- Event correlation: correlate disparate event types and their occurrences based on configurable rules concerning value-ranges, comparisons and time-frames.

- Event processing: additional processing to enrich the data delivered by the event messages.

The event processor publishes alert events based on the correlation and processing results. A monitor dashboard subscribes to these event types and prepares them for publishing via a portal.

This is a very simplified representation of BAM functionality. BAM tools may offer extended functionality like persistency, analyzing, replay, multi-channel presentation, etc. Reporting via a dashboard is only one of the possibilities to present alerts. Custom services, email services or datawarehouses may also gain benefits of the published alert events; plug-and-play. An alert event is a special type of business event.

Example

A service in an ordering process publishes a message about the event of a customer placing an order. It is the company's policy to deliver within two days after ordering. If the delivery is delayed, the customer gets a discount of 10%. The idea behind this policy is that no customers will be lost after a delivery delay; some customers will even prefer a delay.

To monitor this process the "order placed" event is correlated with the "order delivered" event, which comes from a service at the expedition department (or an external company in case of outsourced expedition services). The occurrences of both event types are correlated based on the order number. If an "order delivered" event is not detected within two days after an "order placed" event, an alert event is generated. A service of the financial department is subscribed to these event types. This service initiates a rebate for the customer concerned. Also a dashboard of the expedition department is subscribed to these event types to notify the employee on duty of the delay in order to have him take action and notify the customer. (A phone call is more customer-intimate than a generated email.)

It is easy (and better) to reconfigure the two-day time-frame in this example to a one-day time-frame and take corrective actions before the delay occurs.

This simplified example shows the power of event-based systems in combination with CEP and BAM. Mature CEP and BAM tools are available on the market, but for an experienced development department it is no big deal to home-build this kind of functionality for systems that at this level of abstraction are based on EDA.
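
The correlation rule of this example can be sketched as follows. This is a simplified batch check rather than a real CEP engine, and the function name find_late_orders, the dictionaries of event timestamps and the order numbers are assumptions for illustration. The time-frame is a parameter, so moving from two days to one day is a configuration change.

```python
from datetime import datetime, timedelta
from typing import Dict, List


def find_late_orders(placed: Dict[str, datetime], delivered: Dict[str, datetime],
                     now: datetime, limit: timedelta = timedelta(days=2)) -> List[str]:
    """Correlate 'order placed' and 'order delivered' events on order number.

    Returns the order numbers for which an alert event should be generated.
    """
    alerts: List[str] = []
    for order_number, placed_at in placed.items():
        deadline = placed_at + limit
        delivered_at = delivered.get(order_number)
        if delivered_at is None:
            late = now > deadline           # still not delivered after the deadline
        else:
            late = delivered_at > deadline  # delivered, but too late
        if late:
            alerts.append(order_number)
    return sorted(alerts)


# Hypothetical event occurrences
placed_events = {
    "1001": datetime(2007, 6, 1),
    "1002": datetime(2007, 6, 1),
    "1003": datetime(2007, 6, 3),
}
delivered_events = {"1001": datetime(2007, 6, 2)}
now = datetime(2007, 6, 4, 12, 0)

two_day_alerts = find_late_orders(placed_events, delivered_events, now)
one_day_alerts = find_late_orders(placed_events, delivered_events, now,
                                  limit=timedelta(days=1))
```

With the one-day time-frame, order 1003 is flagged while there is still time to act before the two-day promise is broken.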

Why?

- This pattern decouples the process flow from the monitoring functionality. By using this mechanism the process flow as well as the monitoring functionality can be changed independently, without affecting each other.

- By using this mechanism the monitoring functionality does not influence the performance of the business process in any way.

- By using currently available standards based CEP and BAM products a uniform generic facility can be implemented, which reduces costs and maintenance in comparison to proprietary and/or more tightly coupled system specific monitoring solutions.

Friday, May 25, 2007

The two worlds of SOA

Nick Malik posted a reaction on the idea that entity services are anti-patterns. I agree with his conclusion that it depends on where you stand.

Looking from the business side, there is the composition of business activities into autonomous business functions. These functions offer services to each other and communicate - preferably - events based to obtain their autonomy. Such a business service could easily be outsourced without any disruption of the overall business process. Such a business service can also easily be delivered (shared) to different business contexts (internal or external). I call this the Service Oriented Enterprise. This has nothing to do with IT.

On the other hand there is the composition of application constructs. The top level of such a construct preferably maps with an autonomous business function. Current standards based technologies make it possible for IT to support events based interaction between the autonomous business functions, which in turn boosts the Service Oriented Enterprise.

The idea (or pattern, if you like) of autonomous business functions can be extended into the finer grained application constructs which may e.g. lead to autonomous entity services or deeper layers of granularity.

So: No, entity services are not an anti-pattern.

Wednesday, May 23, 2007

Business doesn't ask for SOA

Testimony from practice: Up till now, at this very moment in time, I haven't met one single business manager who begged me to please deliver him an SOA-based solution. They are running after deadlines and project-cost reductions, but not after SOA. They are even cynical about SOA as being yet another promise after all those previous ones. Of course all those previous promises were a step ahead, just as SOA is. And I believe SOA is a big step ahead. But our business people don't want SOA's, they want flexible and cheap solutions. And they want I.T. not to be a big hurdle when they change the way they happen to do things. But they definitely did not ask me for an SOA.

And another testimony from practice: Developers - in general - don't get the clue of SOA. The marketeers of most software companies evangelize their ability to build service oriented systems. But the people who really have to deal with the details are not educated to get a grip on the practical use of the principles of autonomous business functions, loose coupling and asynchronous design. They are trained to use the modelling tools of Websphere, Sonic and Tibco, but SOA is not their mind-set. It's the individual passionate nerds we have to rely on, not the average developer or designer.

But fortunately I can also testify about curious development teams that are slowly adopting the new wave. Development teams that operate very close to the business in a very rapidly ever changing commercial context. These are the people I enjoy working with.

And another good sign is that the builders of packaged software - like SAP - not only evangelize SOA, but are really spending big efforts to service enable their legacy. Also the SOA initiatives of SaaS-providers like SalesForce are a great boost.

All together I believe it will take at least another 10 years or so before SOA becomes mainstream and common sense to all of us. And keep in mind that we are not hired to deliver SOA's to the business, but solutions. That's what they ask for.

Friday, May 18, 2007

Do we understand SOA?

I came across this posting of Galen Gruman, contributing editor at InfoWorld. He cites Roberto Medrano, executive vice president of SOA Software:

- "Maybe 20 percent of IT folks understand SOA and half of the rest think they do"

And then there is the widely spread misuse of some core terminology. "Experts" tend to speak of "reusable" services. But what they mean is "shareable" services, which is quite a different thing. It makes sense to focus on shareable messages and not only on shareable services. Did you ever hear somebody talk about "shareable messages", typically representing business events? I didn't, while the use of shareable messages really is the key to success. It's shared messages that make the innovation, not shared services.

And what about the idea of loose coupling related to SOA? I quote: "Shared services are disruptive, increasing dependencies and raising questions about what it means to own a service and who should own it." From a lower level IT-perspective this is very true! However it doesn't seem to bother most of our "experts". Not to mention how and why exactly to "share" a service, if a service equates to a properly designed autonomous business function.

Wednesday, May 09, 2007

How to implement loosely coupled transaction processing

This pattern describes how to accomplish transaction processing within the context of autonomous services. The pattern is based on the idea that - by design - every action has a compensating action to undo the original action.

The autonomous services fulfill action requests from the transaction controller (see "1" in figure). If one or more services reply not to have been able to fulfill the request (see "2" in figure, fault feedback) then the transaction controller will send requests for compensating actions to the services that did fulfill the original requests (see "3" in figure).

The concerning services do not distinguish between a normal action and a compensating action. To the services compensating actions are normal actions like any other. The services are not even aware of being part of a transaction.

Examples

- A service is designed to make, change and cancel reservations for hotel rooms. These are three equivalent types of actions. In the context of compensating actions, a cancellation can be considered a compensation for making a reservation. This compensating action results in undoing the original make reservation action.

- A service is designed to increase or decrease the balance of an account. These are two equivalent actions. Both can be seen however as compensating actions for each other (mutual compensating actions). An action that increases the balance by 100 euros can be compensated with an action that decreases the balance by 100 euros.
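
A transaction controller along these lines could be sketched as below. The names (AccountService, run_transaction, ActionFailed) are hypothetical, the calls are synchronous for brevity, and the sketch assumes that compensating actions themselves always succeed, which a real design would have to guarantee or handle.

```python
from typing import Callable, List, Tuple


class ActionFailed(Exception):
    """Fault feedback from a service that could not fulfill a request."""


class AccountService:
    """Autonomous service; it cannot tell a normal action from a compensating one."""

    def __init__(self, balance: int) -> None:
        self.balance = balance

    def change_balance(self, delta: int) -> None:
        if self.balance + delta < 0:
            raise ActionFailed("insufficient funds")
        self.balance += delta


# Each step pairs an action with the compensating action designed for it
Step = Tuple[Callable[[], None], Callable[[], None]]


def run_transaction(steps: List[Step]) -> bool:
    """Hypothetical controller: on a fault, compensate the actions already fulfilled."""
    compensations: List[Callable[[], None]] = []
    for action, compensation in steps:
        try:
            action()
        except ActionFailed:
            for compensate in reversed(compensations):
                compensate()  # just another normal action for the service
            return False
        compensations.append(compensation)
    return True


savings = AccountService(100)
checking = AccountService(20)

succeeded = run_transaction([
    (lambda: savings.change_balance(+100), lambda: savings.change_balance(-100)),
    (lambda: checking.change_balance(-100), lambda: checking.change_balance(+100)),
])
```

Here the second action fails, so the controller requests the compensating decrease on the savings account and both balances end up where they started.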

Design considerations

The designer of the transaction system must always design a compensating action for every action. This is not trivial. Consider the second of the above mentioned examples. Suppose the service was not developed to increase or decrease an amount, but to replace the old amount with a new one. Compensation would then not be possible. If the action would replace the old amount with a new amount of 10 euros, this cannot be reverted without endangering the integrity of the data. Not even if the transaction controller would be aware of the old amount (or if the old amount would have been returned to the transaction controller). Other amount changes (triggered by other sources) may have occurred after the replacement transaction has been executed. If afterwards a compensating action would replace the amount back to the original amount, the amount would be corrupted.
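
The difference is easy to see with a little arithmetic. The two hypothetical functions below replay the same sequence (transaction action, an unrelated deposit of 25 from another source, compensation), once with increase/decrease actions and once with replace actions; the amounts are made up for illustration.

```python
def run_delta_style(start: int) -> int:
    balance = start
    balance += -40  # transaction action: decrease by 40
    balance += 25   # unrelated deposit triggered by another source
    balance += 40   # compensating action: increase by 40
    return balance


def run_replace_style(start: int) -> int:
    balance = start
    old_amount = balance
    balance = 10          # transaction action: replace the amount with 10
    balance += 25         # unrelated deposit triggered by another source
    balance = old_amount  # "compensation": put the old amount back
    return balance


delta_result = run_delta_style(50)      # 75: the unrelated deposit survives
replace_result = run_replace_style(50)  # 50: the unrelated deposit is lost
```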

Why?

In situations where by nature the transaction processing cycle is long (minutes, hours, days, weeks, …) the described pattern of loosely coupled transaction processing allows for an adequate alternative to the well known two-phase-commit mechanism. Also in loosely coupled or decoupled environments (B2B) where minimal dependency (maximal autonomy) is pursued, this pattern offers a more appropriate solution than two-phase-commit.

Traditionally transaction systems use the two-phase-commit mechanism. All participating software components must support and adhere to this mechanism. All components reply whether or not they can honour the request of the transaction controller and - if so - retain the action until a commit- or rollback command has been received. A commit command is issued by the transaction controller as soon as all concerning components have replied that they made their preparations and are ready to commit. If one or more of the components deny the request, the transaction controller will send a roll-back command to the other components in order to allow them to release their retention state.

Because after a commit command the participating components must actually perform the action if they replied to be ready to commit, these components must - awaiting the commit - place locks on the data that is involved in the transaction. This puts a claim on the performance of the system. Such a mechanism is therefore only appropriate for (very) short running processes where a participating component can rely on the performance of the other participating components and on the performance of the transaction controller.

If the processing time to complete the transaction takes a relatively long time, the inherent locking strategy of the two-phase-commit mechanism is not appropriate because it can hang the complete system. Also in loosely coupled or decoupled environments such as B2B the two-phase-commit mechanism puts undesirable dependencies on the environment and the proposed loosely coupled transaction processing will be more appropriate.
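
For comparison, the two-phase-commit mechanism can be sketched as follows. The Participant class and its state names are assumptions, and a real implementation would also need timeouts, durable logging and failure recovery; the sketch only shows that every prepared participant holds a lock until the controller has heard from all the others.

```python
from typing import List


class Participant:
    """Hypothetical component that supports prepare, commit and rollback."""

    def __init__(self, name: str, can_commit: bool = True) -> None:
        self.name = name
        self._can_commit = can_commit
        self.locked = False  # lock on the data involved in the transaction
        self.state = "idle"

    def prepare(self) -> bool:
        if self._can_commit:
            self.locked = True  # lock is held while awaiting the controller
            self.state = "prepared"
            return True
        self.state = "refused"
        return False

    def commit(self) -> None:
        self.state = "committed"
        self.locked = False

    def rollback(self) -> None:
        if self.state == "prepared":
            self.state = "rolled_back"
        self.locked = False


def two_phase_commit(participants: List[Participant]) -> bool:
    # Phase 1: every participant replies whether it is ready to commit
    votes = [participant.prepare() for participant in participants]
    # Phase 2: commit only if all are ready, otherwise release the retention state
    if all(votes):
        for participant in participants:
            participant.commit()
        return True
    for participant in participants:
        participant.rollback()
    return False


a, b = Participant("a"), Participant("b")
c = Participant("c", can_commit=False)

all_ready = two_phase_commit([a, b])                 # True
one_refuses = two_phase_commit([Participant("d"), c])  # False
```

Between prepare and commit the locks stay in place, which is exactly the dependency on the other participants and on the controller described above.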